The Burgess Shale preserves the evidence of a moment when life experimented wildly with body plans, most of which the subsequent history of the planet quietly eliminated. One observes, with some geological patience, a similar moment unfolding in artificial intelligence.

Stephen Jay Gould’s Wonderful Life, his 1989 account of the Burgess Shale and the nature of history, made an argument that remains contested in paleontology but resonates far beyond it. The Cambrian explosion, Gould argued, was not merely a prolific period of evolution but a period of genuinely radical morphological experimentation, in which body plans bearing no resemblance to any living organism were tried and then eliminated. They disappeared not through any inherent inferiority but through what Gould called the “lottery of life”: the contingent, unpredictable churning of mass extinction and recovery that left some lineages standing and others extinct regardless of their intrinsic qualities. The survivors were not necessarily the best. They were the ones that happened to be standing when the selection pressure lifted.

The AI researcher François Chollet — whose work on measuring intelligence has been as rigorous as it is provocative — has explicitly invoked this analogy in discussing the current proliferation of foundation model architectures. The comparison is not casual. Between 2020 and 2025, the number of distinct architectural approaches to foundation model construction expanded at a rate without precedent in the short history of the field.

The Taxonomy of the Cambrian AI

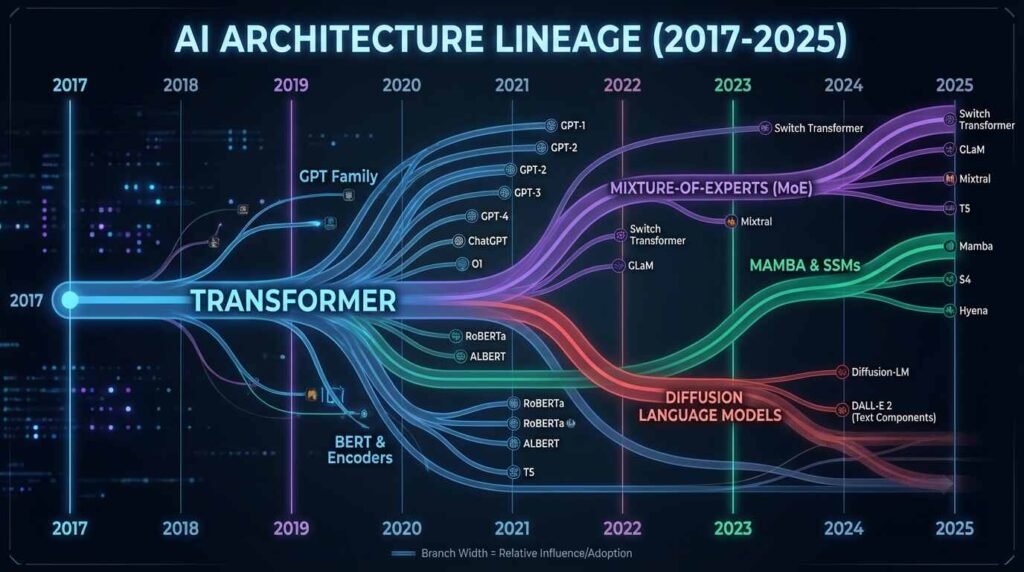

The dominant body plan of the current AI Cambrian is the transformer, the architecture introduced by Vaswani et al. in the 2017 paper “Attention Is All You Need.” It proved so adaptable that within a few years it had colonized language modeling, image generation, protein structure prediction, and reinforcement learning. The transformer is the AI equivalent of the bilateral body plan: a generalized architecture of such broad utility that it turns up in environments its originators could not have anticipated.

But the period since 2020 has not merely been one of transformer refinement. It has produced a real explosion of alternative architectures, each representing a distinct hypothesis about what the fundamental computational structure of intelligence should be. Albert Gu and Tri Dao’s 2023 Mamba architecture, a state space model whose cost grows linearly with sequence length rather than quadratically as transformer attention does on long contexts, represents a genuine morphological departure. Instead of having every token attend to every other token, it passes the sequence through a selective state: a fixed-size internal memory whose update rule depends on each incoming token, so the model decides dynamically what information to retain and what to discard. This is a different body plan, not a variant of an existing one.
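
To make the contrast concrete, here is a toy, pure-Python sketch under simplifying assumptions: a single attention head with no causal mask, and a single-channel selective scan with a diagonal state matrix. The names `attention` and `selective_scan` and the projection weights `w_delta`, `w_b`, `w_c` are illustrative, not Mamba’s actual code, which runs many channels through learned projections and a hardware-aware parallel scan. The point is the cost counter: attention computes one score per pair of tokens, while the scan performs one fixed-size state update per token.

```python
import math


def attention(q, k, v):
    """Single-head scaled dot-product attention (no masking).

    q, k, v are lists of equal-length vectors, one per token. Every query is
    scored against every key, so n tokens cost n * n scores.
    """
    d = len(q[0])
    outputs, scores_computed = [], 0
    for qi in q:
        scores = [sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d) for kj in k]
        scores_computed += len(scores)
        m = max(scores)  # subtract the max for a numerically stable softmax
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        outputs.append(
            [sum(w * vj[c] for w, vj in zip(weights, v)) / total for c in range(len(v[0]))]
        )
    return outputs, scores_computed


def softplus(z):
    # Numerically stable log(1 + e^z); keeps the step size delta positive.
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def selective_scan(x, a, w_delta, w_b, w_c):
    """Toy single-channel selective state space scan.

    x: one scalar input per token. a: negative decay rates (a diagonal A),
    one per state dimension. w_delta, w_b, w_c: hypothetical projection
    weights that make delta, B and C depend on the current input, which is
    the "selective" part. Cost: one fixed-size state update per token.
    """
    h = [0.0] * len(a)
    ys, updates = [], 0
    for xt in x:
        delta = softplus(w_delta * xt)  # larger delta: old state decays faster
        b = [wb * xt for wb in w_b]     # input-dependent B
        c = [wc * xt for wc in w_c]     # input-dependent C
        # Simplified discretized update: h <- exp(delta * a) * h + delta * B * x
        h = [math.exp(delta * ai) * hi + delta * bi * xt for ai, hi, bi in zip(a, h, b)]
        ys.append(sum(ci * hi for ci, hi in zip(c, h)))
        updates += 1
    return ys, updates


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]
_, attention_cost = attention(tokens, tokens, tokens)
_, scan_cost = selective_scan(
    [t[0] for t in tokens], a=[-1.0, -0.5], w_delta=1.0, w_b=[1.0, 0.5], w_c=[0.3, 0.7]
)
print(attention_cost, scan_cost)  # 16 4
```

Doubling the sequence length quadruples `attention_cost` but only doubles `scan_cost`. The input-dependent `delta` is what lets the scan decide, token by token, how much of its old state to keep.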

Mistral AI’s Mixtral models embody another distinct architectural hypothesis, the sparse mixture of experts: a model need not engage all its parameters for every token. Instead, a learned router sends each token, at each layer, to a small subset of expert sub-networks (two of eight in Mixtral 8x7B), chosen dynamically from a larger population. This is, in biological terms, a colonial organism: a superstructure composed of specialized sub-agents, activated in different combinations for different inputs.
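
A minimal sketch of top-k routing, assuming a linear router and a softmax taken over the selected experts’ scores (the gating form commonly described for sparse MoE models such as Mixtral). The function names, router weights, and toy experts are hypothetical; the `calls` list records which experts actually run.

```python
import math


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_layer(x, router_weights, experts, top_k=2):
    """Route one token vector x through a sparse mixture-of-experts layer.

    router_weights: one weight vector per expert (a hypothetical linear router).
    experts: callables mapping a vector to a vector of the same length.
    Only the top_k highest-scoring experts are evaluated for this token.
    """
    logits = [sum(w * xi for w, xi in zip(wr, x)) for wr in router_weights]
    chosen = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:top_k]
    gates = softmax([logits[i] for i in chosen])  # renormalize over chosen experts only
    out = [0.0] * len(x)
    for g, i in zip(gates, chosen):
        y = experts[i](x)
        out = [o + g * yi for o, yi in zip(out, y)]
    return out, chosen


calls = []  # records the scale of every expert that actually executes


def make_expert(scale):
    # Toy expert: multiplies its input by a fixed scale.
    def expert(v):
        calls.append(scale)
        return [scale * e for e in v]
    return expert


experts = [make_expert(i + 1) for i in range(8)]
router = [[float(i), 0.0] for i in range(8)]  # router logits 0..7 for x = [1, 0]
out, chosen = moe_layer([1.0, 0.0], router, experts, top_k=2)
print(chosen, calls)  # [7, 6] [8, 7]
```

Only two of the eight experts execute for this token, which is why a sparse MoE model’s per-token compute tracks its active parameters rather than its total parameter count.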

Gould’s Lottery and the Contingency of Survival

Gould’s most provocative argument in Wonderful Life was that the survivors of the Cambrian were not selected on the basis of architectural superiority. Pikaia, a small, unprepossessing animal from the Burgess Shale now recognized as an early chordate and so a member of the broad lineage that includes the vertebrates, did not persist because its chordate body plan was demonstrably superior to the radically different plans of Anomalocaris or Opabinia. Its lineage survived, Gould argued, through something closer to historical accident: it was in the right place, at the right population density, with the right generalized flexibility to exploit conditions after the extinctions that the more spectacular and specialized Cambrian experiments could not.

Applied to the current AI Cambrian, this argument carries significant implications. The architecture that comes to dominate the field over the next decade may not be the one whose fundamental design is most theoretically elegant, most computationally efficient, or most biologically plausible. It may be the one with the largest ecosystem of supporting tools, the deepest investment from the institutions with the resources to train it at scale, and the most extensive deployment infrastructure — the equivalent of Gould’s Pikaia: generalized, somewhat unremarkable, and present in sufficient numbers when the market equivalent of a mass extinction event reorganizes the competitive landscape.

The Anomalocaris of AI: Spectacular but Terminal

Anomalocaris was among the apex predators of the Cambrian: large, mobile, equipped with elaborate grasping appendages, and one of the most formidable organisms of its era. It has no living descendants. Its body plan, however well adapted to the Cambrian ecosystem, did not persist through the subsequent extinctions and reorganizations of the marine fauna. It was spectacular and terminal.

In the current AI landscape, one observes certain architectures that fit this characterization: technically impressive achievements that may prove to be evolutionary dead ends. Diffusion models applied to language generate text through iterative denoising rather than left-to-right autoregressive generation. The novelty lies in the generation procedure (most such systems still use a transformer backbone), and while they have demonstrated capabilities in specific domains, they have not, as of this writing, displaced the autoregressive transformer in the general-purpose language modeling niche. Symbolic AI hybrids, systems that attempt to combine neural network learning with explicit logical reasoning, have shown promise in constrained environments but have repeatedly failed to scale with the ease that pure neural approaches have demonstrated.
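
The difference in generation procedure can be sketched with a toy comparison, assuming masked (absorbing-state) diffusion, one of several ways diffusion has been adapted to text. The model here is a hypothetical oracle that already knows the target sentence, and its confidence scores are invented solely to show positions being filled out of order; a real system would use a trained network and a tuned unmasking schedule.

```python
import math

MASK = None  # placeholder for a not-yet-generated position


def autoregressive_decode(predict_next, length):
    """Left-to-right generation: one model call per token."""
    seq, calls = [], 0
    while len(seq) < length:
        seq.append(predict_next(seq))
        calls += 1
    return seq, calls


def masked_diffusion_decode(predict_all, length, num_steps):
    """Start fully masked; each call proposes a (token, confidence) pair for
    every position, and the most confident masked positions are kept.
    Positions can be filled in any order, several per call."""
    seq = [MASK] * length
    per_step = math.ceil(length / num_steps)
    order, calls = [], 0
    while any(t is MASK for t in seq):
        proposals = predict_all(seq)
        calls += 1
        masked = [i for i, t in enumerate(seq) if t is MASK]
        masked.sort(key=lambda i: proposals[i][1], reverse=True)  # stable for ties
        chosen = masked[:per_step]
        for i in chosen:
            seq[i] = proposals[i][0]
        order.append(chosen)
    return seq, calls, order


# Hypothetical "model": it knows the target, and its per-word confidence is
# made up purely to show out-of-order unmasking.
target = "the cat sat on the mat".split()
confidence = {"cat": 0.9, "mat": 0.8, "sat": 0.7, "on": 0.6, "the": 0.5}

ar_seq, ar_calls = autoregressive_decode(lambda prefix: target[len(prefix)], len(target))
md_seq, md_calls, md_order = masked_diffusion_decode(
    lambda seq: [(w, confidence[w]) for w in target], len(target), num_steps=3
)
print(ar_calls, md_calls, md_order)  # 6 3 [[1, 5], [2, 3], [0, 4]]
```

The autoregressive loop needs one model call per token in a fixed order; the masked decoder fills several positions per call, most confident first, so the number of calls is set by the step schedule rather than by the sequence length.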

These may be the Anomalocaris lineages: architecturally sophisticated, interesting to study, but unlikely to represent the end-state of the technology. Their failure, if it comes, will not be evidence that they were poorly designed; it will be evidence that the selective environment of the current AI ecosystem did not favor what they offered, in the same way that the post-Cambrian ocean did not favor what the great predator of the Burgess Shale offered.

Geoffrey Hinton, Yann LeCun, and Competing Evolutionary Predictions

The disagreement between Geoffrey Hinton and Yann LeCun about the architectural future of AI is, in the terms of this analysis, a disagreement between two paleontologists looking at the Cambrian fauna and reaching different conclusions about which lineages will persist.

Hinton — who has publicly expressed concern about the trajectory of the field he helped create — has suggested that the transformer architecture and its descendants are likely to remain dominant, largely because the infrastructure and investment required to train them at scale represent a barrier to displacement that no alternative has yet demonstrated the ability to surmount. This is an argument from ecological incumbency: the existing body plan is not optimal, but it is established, and establishment is itself a form of fitness in a world where training infrastructure, deployment tooling, and research talent constitute the selective environment.

LeCun’s competing prediction is that world-model architectures, systems that build internal representations of physical and causal reality rather than predicting the next token in a sequence, are the lineage most likely to lead to human-level intelligence. This is an argument from adaptive advantage: the current dominant architecture is achieving impressive results on a task that is not, in LeCun’s assessment, the task that matters for general intelligence. In Cambrian terms, it is the equivalent of arguing that filter-feeding body plans would ultimately prove more durable than active predators because the filter-feeding niche is more stable than the niche of apex predation.

Both predictions may be correct. Ecological transitions frequently produce not a single winner but a reorganized landscape in which different niches are occupied by different successful lineages. The chordates, the lineage to which Pikaia belonged, did not eliminate the arthropods; insects, crustaceans, and their relatives, descended from other Cambrian lineages, are today the most species-rich animals on the planet. The AI equivalent of this outcome may prove more accurate than any single-winner prediction: a reorganized landscape with transformers occupying some niches, Mamba-class state space models occupying others, and world-model architectures establishing themselves in domains that current architectures cannot reach.

What the Ecological Niche Determines

George Dyson’s Darwin Among the Machines, reviewed on this site, is worth recalling in this context, particularly Dyson’s argument that the evolution of computation was following biological patterns long before AI researchers began explicitly invoking evolutionary terminology. The niche concept, the specific ecological role that determines which adaptations are advantageous, is the key analytical tool for predicting which AI architectures the field will consolidate around.

The niches in the current AI ecosystem are defined by deployment constraints: latency requirements, context-length demands, compute budgets, privacy requirements, and edge versus cloud deployment. An architecture that performs superbly in a data center may be unfit for edge deployment; an architecture optimized for long-context reasoning may be overbuilt for the conversational applications that make up the majority of current commercial use cases. These ecological boundaries will likely determine the boundaries of the surviving lineages more than any intrinsic architectural superiority.
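
One way to see how a deployment niche can filter architectures is a back-of-the-envelope memory calculation. The sketch below compares a transformer’s key-value cache, which grows with context length, against the fixed recurrent state of a Mamba-style model. The model sizes, the 4 GiB edge budget, and the Mamba-style settings (`d_state=16`, `expand=2`) are assumptions for illustration, and the figures cover inference state only, not model weights or activations.

```python
GIB = 1024 ** 3
MIB = 1024 ** 2


def kv_cache_bytes(n_layers, d_model, context_len, bytes_per_value=2):
    # A transformer caches one key and one value vector (d_model wide) per
    # token per layer, so this grows linearly with context length. Assumes
    # standard multi-head attention with no grouped-query sharing.
    return 2 * n_layers * context_len * d_model * bytes_per_value


def ssm_state_bytes(n_layers, d_model, d_state=16, expand=2, bytes_per_value=2):
    # A Mamba-style layer carries a fixed (expand * d_model) x d_state
    # recurrent state regardless of context length; the small convolution
    # buffer is ignored.
    return n_layers * expand * d_model * d_state * bytes_per_value


def fits_niche(state_bytes, budget_bytes):
    # A deployment "niche" reduced to a single memory budget for this sketch.
    return state_bytes <= budget_bytes


# Hypothetical 32-layer model, d_model = 4096, fp16 values, 32k-token context.
kv = kv_cache_bytes(n_layers=32, d_model=4096, context_len=32_768)
ssm = ssm_state_bytes(n_layers=32, d_model=4096)
edge_budget = 4 * GIB  # hypothetical memory available for inference state

print(kv / GIB, ssm / MIB)                                         # 16.0 8.0
print(fits_niche(kv, edge_budget), fits_niche(ssm, edge_budget))   # False True
```

Under these assumptions, the attention cache alone overflows the hypothetical edge budget at a 32k-token context, while the state space model’s state stays in the megabytes whatever the context length. Techniques such as grouped-query attention or cache quantization shrink the transformer figure and would narrow the gap considerably.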

Gould’s famous conclusion in Wonderful Life was that the history of life, replayed from the Cambrian, would not necessarily produce humans — that the survivors were contingent on historical accident rather than predetermined by inherent superiority. Applied to AI: replay the development of foundation models from 2017 with the same starting conditions but different institutional investments, different research communities, different timing of key results, and the dominant architecture of 2035 might well be different from what the current trajectory suggests. The Cambrian is not a deterministic process. Neither is the one we are currently inside.

Sources

- Albert Gu & Tri Dao, “Mamba: Linear-Time Sequence Modeling with Selective State Spaces,” arXiv (2023): https://arxiv.org/abs/2312.00752

- Mistral AI, “Mixtral of Experts,” announcement (2023): https://mistral.ai/news/mixtral-of-experts/

- Stephen Jay Gould, Wonderful Life: The Burgess Shale and the Nature of History (W.W. Norton, 1989)

- François Chollet, ARC Prize documentation and public commentary: https://arcprize.org/

- Vaswani et al., “Attention Is All You Need,” NeurIPS (2017): https://arxiv.org/abs/1706.03762