Nobody told you that understanding AI would be this complicated. Or this important. Or that ignoring it would cost you something real — not just professionally, but in the basic sense of knowing what kind of world your kids are growing up in.

The problem isn’t intelligence. The people I talk to every day at the diner — contractors, nurses, teachers, small business owners — are sharp. The problem is that AI, science, and technology get presented as three separate subjects, each with its own vocabulary, its own experts, its own barrier to entry. You read a headline about large language models and then another about CRISPR and another about SpaceX’s Starship and you’re supposed to file them in different mental drawers labeled “tech,” “science,” and “future stuff.”

That framing is wrong. These aren’t separate stories. They’re one story. One long, continuous human project that started when the first scientist asked what something was made of and the first engineer asked what it could be made to do. AI did not arrive from Mars. It grew out of the same intellectual soil as evolutionary biology, statistical physics, and the space program. Understanding that lineage doesn’t just make you more informed — it makes the whole picture less frightening.

This guide follows that lineage.

What Science Actually Is — and Why It Keeps Producing Surprises

Start at the foundation. Science is not a collection of facts. It is a method for testing ideas against reality and updating your beliefs when reality pushes back. That sounds obvious until you watch how badly people misuse the word — “the science says” deployed like a verdict, when what science actually produces is a provisional best answer subject to revision.

Karl Popper formalized this in what he called the demarcation problem: the line between science and everything else is falsifiability. A claim is scientific if it can, in principle, be proven wrong. Newtonian mechanics was science. When Einstein showed it broke down at extreme velocities, physics didn’t collapse — it expanded. That’s the mechanism. The Demarcation Problem: Karl Popper, Falsifiability, and the Boundary Between Science and Pseudoscience is worth reading if you want to understand why this distinction matters more right now than it has in decades, in an era when AI-generated content and pseudoscientific claims can spread faster than corrections.

The surprises science keeps producing aren’t failures of the method. They’re evidence it’s working. Consider the Boltzmann Brain paradox: statistical thermodynamics, the same physics that describes your refrigerator, leads, when pushed to its logical extreme in a universe that lasts long enough, to the deeply unsettling conclusion that a fully formed conscious mind fluctuating into existence at random can be more probable than one arising through billions of years of evolution. Most physicists read that result as a warning about the assumptions behind it, not as a description of you. The universe, taken seriously, is weirder than anything science fiction writers have invented. The Fermi Paradox goes further: given the age and size of the cosmos, intelligent life should be everywhere. The silence might mean there’s a Great Filter ahead of us rather than behind us. These aren’t fringe ideas. They’re mainstream physics and astrophysics asking questions that don’t yet have answers.

This is the intellectual environment in which AI was born. Not in a vacuum. Not as a product feature. As the latest experiment in a species-wide investigation into the nature of mind, matter, and information.

The Biological Roots of Artificial Intelligence

You cannot understand AI without understanding Darwin. Not metaphorically — literally.

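The neural networks that power modern AI are loosely modeled on biological brains: layers of simple units joined by connections of adjustable strength. The training process, exposing a model to massive amounts of data and adjusting its parameters based on whether its outputs are right or wrong, runs on the same logic as natural selection: vary, test against the environment, keep what works. The mechanics differ. Most models are trained by gradient descent, which nudges one set of parameters toward lower error instead of culling a population of rivals. But the outcome is familiar: changes that produce good outputs persist, and changes that produce bad ones don’t.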
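For readers who want to see that adjust-on-error loop in miniature, here is a rough sketch in Python. Everything in it is made up for illustration: the one-parameter "model," the three training examples, and the hidden rule (the answer is always twice the input). Real networks adjust billions of parameters, but each adjustment is the same kind of small nudge, a technique called gradient descent.

```python
# Toy training loop: one adjustable parameter, nudged after every mistake.
def train(examples, steps=200, learning_rate=0.01):
    weight = 0.0  # the model starts out knowing nothing
    for _ in range(steps):
        for x, target in examples:
            prediction = weight * x
            error = prediction - target
            # The gradient of the squared error with respect to weight
            # is 2 * error * x; step a little in the opposite direction.
            weight -= learning_rate * 2 * error * x
    return weight

# Illustrative data only. Hidden rule: target = 2 * x.
examples = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
learned = train(examples)
print(round(learned, 3))
```

Nobody tells the program that the rule is "multiply by two." It starts at zero, gets corrected a few hundred times, and ends up there on its own.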

Richard Dawkins extended Darwin’s logic beyond organisms in The Selfish Gene and The Extended Phenotype: genes build structures in the world — not just bodies, but beaver dams, spider webs, cuckoo nests — that serve genetic replication. The Extended Phenotype: How Your Genes Build Structures Beyond Your Body explores this in detail. The implication for AI: intelligence itself might be a kind of extended phenotype — a structure the human genome builds to propagate itself, and one that may eventually build structures of its own.

Evolution also gave us horizontal gene transfer — the ability of organisms to swap genetic material sideways across species, not just vertically through reproduction. Darwin’s tree of life is, at the microbial level, more of a tangled web. Modern AI training has an analog: models can be fine-tuned on the outputs of other models, knowledge transferred laterally, capabilities shared and recombined. The biological metaphors aren’t decorative. They’re structurally accurate.

The deepest connection is this: both evolution and AI produce emergent complexity that nobody designed. Evolution didn’t plan the eye. It stumbled into it through variation and selection over geological time. AI systems develop emergent properties — capabilities that nobody programmed — when trained at sufficient scale. A model trained to predict text learns geometry. A model trained on code develops reasoning abilities. The system surprises its creators. Darwin would recognize the dynamic immediately.

What AI Actually Is — Stripped of the Hype

Large language models, the technology behind ChatGPT, Claude, Gemini, and their competitors, are prediction engines. Given a sequence of text, they predict the next token (a word or a piece of one), append it, and predict again, one token at a time, until a coherent response emerges. That’s the mechanism. What makes it powerful is the training data: a large share of the text humans have published, compressed into billions of numerical parameters that encode patterns of meaning, logic, and language.
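The predict-the-next-word idea can be shown with a toy far simpler than any real language model. The sketch below assumes a ten-word "training corpus" and does nothing but count which word tends to follow which; the function names are invented for this example. A real model replaces the counting table with a neural network and the ten words with a vast body of text, but the loop of predict, append, repeat is the same.

```python
from collections import Counter, defaultdict

def build_model(text):
    """Count which word follows which in the training text."""
    follows = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def generate(model, start, max_words=8):
    """Repeatedly append the most frequent next word."""
    output = [start]
    for _ in range(max_words - 1):
        candidates = model.get(output[-1])
        if not candidates:
            break  # the model has never seen anything follow this word
        output.append(candidates.most_common(1)[0][0])
    return " ".join(output)

# Illustrative corpus only.
corpus = "the cat sat on the mat and the cat slept"
model = build_model(corpus)
print(generate(model, "sat", max_words=4))  # prints "sat on the cat"
```

The output is grammatical without the program knowing any grammar. It only knows what usually comes next.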

The result is not intelligence in the way your brain is intelligent. It has no continuous experience, no memory between conversations by default, no body, no survival instinct. But it has something extraordinary: access to the distilled pattern-structure of everything humans have written, and the ability to synthesize across it at a speed no human can match.

DeepSeek’s emergence in early 2025 showed that this technology is not a monopoly of American capital. A Chinese lab produced a model competitive with the best American systems at a reported fraction of the cost, shaking assumptions about how much compute and money were required. That’s important not just as a geopolitical data point; it’s evidence that the AI race is accelerating in ways the incumbents didn’t anticipate. Open source vs. Big Tech is now one of the defining battles in technology, with real consequences for who controls the tools reshaping civilization.

The shift from AI as a tool you use to AI as an agent that acts on your behalf is the current frontier. The Rise of AI Agents documents the transition from chatbots — which respond when prompted — to autonomous systems that can plan, execute multi-step tasks, browse the web, write and run code, and operate inside software ecosystems without a human approving each step. The Future of Agentic AI examines where this leads. The short answer: systems that don’t wait to be asked.
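The difference between a chatbot and an agent is easier to see in code than in a definition. What follows is a bare-bones sketch, not how any particular product works: the two "tools" are stand-in functions, and the `plan` function is a hard-coded placeholder for the step where a real agent would ask a language model what to do next. All names are hypothetical.

```python
# Hypothetical tools: plain functions standing in for web search, code execution, etc.
def search(query):
    return f"results for {query!r}"

def summarize(text):
    return f"summary of {text}"

TOOLS = {"search": search, "summarize": summarize}

def plan(goal):
    """Placeholder for the model's planning step: an ordered list of tool calls.
    An argument of None means "use the previous step's result"."""
    return [("search", goal), ("summarize", None)]

def run_agent(goal):
    """Carry out every planned step in order, feeding results forward."""
    result = None
    log = []
    for tool_name, argument in plan(goal):
        tool_input = argument if argument is not None else result
        result = TOOLS[tool_name](tool_input)  # runs with no human approval step
        log.append((tool_name, result))
    return result, log

final, log = run_agent("solar panels")
print(final)
```

A chatbot would stop after one reply. The agent here takes a goal, breaks it into steps, and works through them, handing each result to the next step without checking in.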

Agent swarms take this further. Multiple AI agents, each with a specialized function, collaborating on problems too complex for any single system. The analogy to ant colonies, to immune systems, to markets — collective intelligence without central command — is not accidental. It’s the same architecture that evolution discovered works. Frameworks like LangGraph, CrewAI, and AutoGen are building the infrastructure for this now.

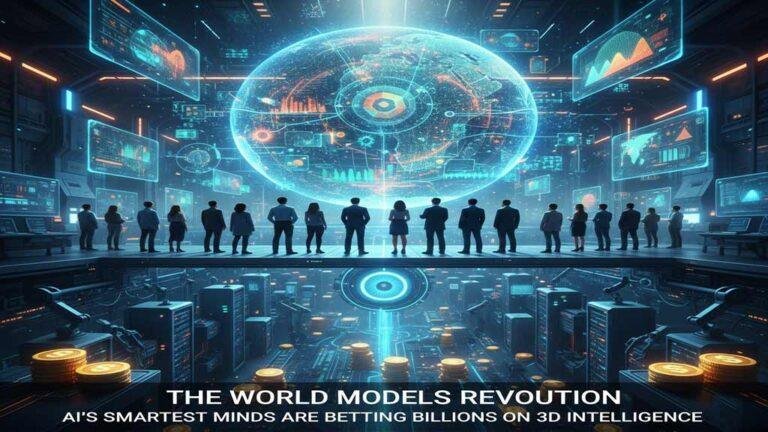

The World Models Revolution represents the next leap: AI that doesn’t just process text but builds internal representations of physical reality — models of how objects move, how forces interact, how cause and effect unfold in three-dimensional space. This is the architecture required for robotics, for autonomous vehicles, for systems that operate in the physical world rather than just the digital one.

The Infrastructure Nobody Talks About

The AI you interact with costs something. The energy and water crisis behind every ChatGPT query is real and growing. Data centers consume enormous quantities of electricity for computation and water for cooling. The energy grid is under siege as tech giants scramble to secure power at a scale the current infrastructure wasn’t built to support.

This connects to space technology in a direct way. Harvesting solar power from orbit — collecting energy above the atmosphere, where sunlight is constant and unfiltered, and beaming it to earth — is no longer science fiction. It’s an engineering challenge with active research programs. The economics of Starlink and SpaceX’s reusable launch system are what make this feasible: if you can put hardware in orbit cheaply enough, the calculus on space-based energy changes entirely.

The Quiet Rise of Local AI is the counter-movement. Smaller models that run on consumer hardware — a laptop, a home server — without sending your data to a corporation’s cloud. If you want to run an AI model locally on your own computer, the barrier is lower than most people realize. This matters for privacy, for cost, and for the long-term question of who controls the AI you use.

How New York City became one of the world’s top tech hubs is a story directly relevant to Long Island. The concentration of AI research, biotech, fintech, and climate technology in Manhattan and Brooklyn has consequences for housing, for the labor market, for the kinds of businesses that survive and the kinds that don’t. Smart city technology — AI and sensors managing transit, emergency services, utilities across eight million people — is already operational. It’s not a future scenario.

Space: The Context That Changes Everything

Science and technology don’t happen in a social vacuum, but they do happen in a physical one. The universe. And the human relationship to that universe is undergoing its first real revision since Copernicus.

Building the Moon isn’t a NASA poster. It’s a geopolitical project involving the United States, China, the European Space Agency, and private companies, each racing to establish a permanent presence on a body that has strategic and resource value. The James Webb Space Telescope is reading the atmospheric chemistry of planets orbiting other stars — looking for oxygen, water vapor, methane, the signatures of biology — with a precision that was unimaginable ten years ago.

The thermodynamics of asteroid interception is not a metaphor; NASA’s DART mission proved we can alter the trajectory of a space rock. It’s an engineering discipline. The Stawell Underground Physics Laboratory is buried in a working gold mine in Australia, hunting for dark matter: the invisible substance that makes up roughly 27% of the universe’s mass and energy, which we have never directly detected despite its gravitational effects being measurable everywhere. This is what paradigm shifts in modern astrophysics look like from the inside: a generation of scientists building instruments to detect something they can’t see, in a mine, in a town most people couldn’t place on a map, because the physics demands it.

The Hard Questions That Connect All of It

Science and technology raise questions that don’t belong to any single discipline. They belong to everyone, because everyone is affected by the answers.

What is consciousness? The philosophical war between reductionism — the view that mind is just what brains do, fully explicable by neuroscience — and emergence — the view that mind is a property that arises from complex systems in ways that can’t be reduced to component parts — isn’t academic. It’s the question underlying every conversation about whether AI can be conscious, whether animals suffer, whether there is anything it is like to be something other than yourself. What happens to your digital life when you die? is a more mundane version of the same question: what are we, and what persists?

AI vs. your doctor is a question millions of people are already navigating, whether they frame it that way or not. The answer is nuanced and important: AI can pattern-match across more medical literature than any human can read, identify correlations in imaging data that radiologists miss, and flag drug interactions that fall through bureaucratic cracks. It cannot replace clinical judgment, contextual understanding of a patient’s whole life, or the human decision to push for a second opinion. Knowing the difference matters.

Can AI write a better resume than you? We tested it. The honest answer is: better at surface presentation, worse at authentic specificity. AI produces prose that looks polished and lands flat. The best resumes, like the best anything, require a point of view that no model trained on everyone else’s writing can fully supply.

The creative questions are as important as the practical ones. Copyright and the dataset — the legal war over whether AI training on human-made art constitutes infringement — will shape the economics of creativity for the next decade. The Algorithm and the Artist examines whether AI-generated images constitute art at all, or something categorically new that needs a different word. The uncanny valley of generative video — why AI video still looks subtly wrong to trained eyes, and what it would take to cross that threshold — is a technical question with enormous cultural stakes.

How to Think About All of This

Three principles.

First: context is not complexity. Understanding that AI grew out of evolutionary theory and statistical physics doesn’t require a PhD. It requires curiosity and a willingness to follow the thread. The thread is there. This blog has been pulling it for years — from the Extended Phenotype to the Fermi Paradox to the Rise of AI Agents — because the thread is one thread.

Second: the most important questions are not technical. Who controls the models. Who owns the data. How the benefits and costs distribute across society. Whether the speed of change leaves room for the communities, institutions, and relationships that make human life worth living. These are political, economic, and philosophical questions. Experts in machine learning are not automatically qualified to answer them. Neither are politicians who can’t explain what a neural network does. That gap is where the public conversation has to happen.

Third: being far from the center of technology does not mean being unaffected by it. Long Island is not Silicon Valley. Mount Sinai is not San Francisco. But the energy infrastructure question matters here. The real estate market is already being shaped by remote work patterns enabled by broadband and AI productivity tools. The tech corridor growing in Hudson Yards is forty miles from where you’re reading this. The startups building the future in NYC are hiring. The changes coming are not abstract. They are arriving on the North Shore the same way every economic shift has arrived: through the housing market, the job market, and the conversations people have at diners.

This guide exists to make those conversations better.

Sources

- Popper, K. (1959). The Logic of Scientific Discovery. Routledge. https://www.routledge.com/The-Logic-of-Scientific-Discovery/Popper/p/book/9780415278447

- Dawkins, R. (1982). The Extended Phenotype. Oxford University Press. https://global.oup.com/academic/product/the-extended-phenotype-9780192880512

- Boltzmann, L. (1895). On certain questions of the theory of gases. Nature, 51, 413–415. https://www.nature.com/articles/051413b0

- Hart, M.H. (1975). Explanation for the absence of extraterrestrials on Earth. Quarterly Journal of the Royal Astronomical Society, 16, 128–135. https://ui.adsabs.harvard.edu/abs/1975QJRAS..16..128H

- NASA DART Mission. (2022). DART Impact Results. https://dart.jhuapl.edu/

- James Webb Space Telescope. NASA. https://webb.nasa.gov/

- Anthropic. (2024). Claude: Model Card and System Prompt. https://www.anthropic.com/index/claude-2

- OpenAI. (2023). GPT-4 Technical Report. https://openai.com/research/gpt-4

- DeepSeek AI. (2025). DeepSeek-R1 Technical Report. https://arxiv.org/abs/2501.12948

- Stawell Underground Physics Laboratory. https://www.stawell.unimelb.edu.au/

- Kuhn, T.S. (1962). The Structure of Scientific Revolutions. University of Chicago Press. https://press.uchicago.edu/ucp/books/book/chicago/S/bo13179781.html

- LeCun, Y. (2022). A Path Towards Autonomous Machine Intelligence. Meta AI. https://openreview.net/pdf?id=BZ5a1r-kVsf