There is a question that sounds academic until it isn’t. How do you tell the difference between science and something that only dresses like science?

Karl Popper asked it in Vienna in the 1930s and gave it a name: the Abgrenzungsproblem. The demarcation problem. The challenge of drawing a line between genuine empirical inquiry and the theories that mimic its form without accepting its discipline. He spent his career on it. Philosophers have been arguing about his answer ever since. And the question has never been more urgent than it is right now — in an era when AI can generate peer-formatted misinformation at scale, when a quarter of the U.S. Congress denies climate science, and when supplements on late-night television get dressed up in the vocabulary of research.

Popper called it “the key to most of the fundamental problems in the philosophy of science.” He was not exaggerating.

What Popper Saw in Vienna

To understand why Popper built what he built, you have to understand what he was looking at. Vienna in the early twentieth century was a city stuffed with big ideas competing for the title of Truth. Freudian psychoanalysis on one side. Adlerian individual psychology. Marxist historical theory. All of them confident, all of them elaborate, all of them with an answer for everything. Then there was Einstein, who had made a prediction so specific and so dangerous that if it turned out to be wrong, his entire framework would collapse: that starlight bends by a definite amount as it passes a massive body such as the Sun. Arthur Eddington confirmed it during the 1919 solar eclipse. The prediction held.

What Popper noticed was a structural difference, not just a difference of quality. Freud and Adler could explain any behavior. Whatever you brought them — love, violence, ambition, cowardice — they had a mechanism that accommodated it. The theory was never at risk. Marx operated the same way. History was always confirming the dialectic, one way or another. But Einstein had put his neck on the block. A single clean counter-example would have finished him.

That asymmetry became Popper’s demarcation criterion. A theory is scientific if it generates predictions that could, in principle, be proven false. If a theory is compatible with every possible observation — if it explains everything because it risks nothing — it is not science. It may be interesting, it may even be true in some sense, but it is not doing the work that science does.
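The logical asymmetry behind the criterion can be written out in two lines. This is a sketch in standard propositional notation; the labels $T$ (a theory) and $O$ (an observation the theory predicts) are introduced here for illustration and are not Popper's own symbols.

```latex
% Assumes amsmath and amssymb (for \nvdash).
% T: a theory; O: an observation it predicts (illustrative labels).
\begin{align*}
&\text{Falsification (modus tollens, valid):} & T \rightarrow O,\ \neg O &\ \vdash\ \neg T \\
&\text{Verification (affirming the consequent, invalid):} & T \rightarrow O,\ O &\ \nvdash\ T
\end{align*}
```

A failed prediction refutes the theory deductively, while a successful one never proves it. A theory compatible with every possible observation forbids nothing, so the first line can never be brought to bear on it.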

This is what he called falsifiability, and he laid it out in Logik der Forschung in 1934, published in English in 1959 as The Logic of Scientific Discovery. The criterion sounds simple. The argument around it has been anything but.

The Problem with Falsification as a Bright Line

Popper’s critics were not wrong. They were just working on a harder version of the same problem.

The Duhem-Quine thesis, named for physicist Pierre Duhem and philosopher Willard Van Orman Quine, points to something real: no scientific hypothesis gets tested in isolation. Every experiment rests on a stack of auxiliary assumptions — the instruments are working, the background theory is sound, the sample hasn’t been contaminated. When a prediction fails, you always have the option of blaming the auxiliaries rather than the core theory. There is always somewhere to hide. Popper acknowledged this. He maintained that the logical criterion — the formal possibility of falsification — remained valid regardless. But critics pointed out that if every theory can survive any counter-evidence by adjusting its assumptions, the bright line starts to blur.
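The structure of the objection can be sketched in propositional notation. The labels are assumptions made here for illustration: $H$ is the core hypothesis, $A_1, \dots, A_n$ are the auxiliary assumptions, and $O$ is the predicted observation.

```latex
% Assumes amsmath. H: core hypothesis; A_i: auxiliary assumptions;
% O: predicted observation (all illustrative labels).
\begin{align*}
(H \land A_1 \land \dots \land A_n) \rightarrow O,\ \neg O \ &\vdash\ \neg (H \land A_1 \land \dots \land A_n) \\
&\equiv\ \neg H \lor \neg A_1 \lor \dots \lor \neg A_n
\end{align*}
```

The failed prediction shows only that at least one conjunct is false. Logic alone does not say which one, and that is the room a defender uses to sacrifice an auxiliary instead of the hypothesis.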

John D. Norton at the University of Pittsburgh has made the case that the criterion fails for a more fundamental reason: science is actually inductive at its core, and what distinguishes robust science from pseudoscience is not whether the theory is falsifiable but whether it is backed by deep, legitimate inductive support. On Norton’s view, plenty of bad science is technically falsifiable and has in fact been falsified — and yet it persists, because the people advancing it evade the falsification rather than accept it. What we identify as pseudoscience is characterized not by unfalsifiability per se, but by the absence of honest engagement with disconfirming evidence.

That is a more painful diagnosis. Because it puts the problem where it actually lives: not in the logic of the theory, but in the character of the people defending it.

Kuhn, Lakatos, and the Sociology of Scientific Life

Thomas Kuhn came at the problem from the history of science, and what he found was that Popper’s picture of scientists as perpetual falsifiers did not match what scientists actually did. Scientists working within an established paradigm do not spend their days trying to overturn it. They solve puzzles within its terms. They treat anomalies as problems to explain away, not as indictments of the core framework. When enough anomalies accumulate and the framework can no longer contain them, a paradigm shift occurs — but Kuhn argued this looks less like a rational verdict and more like a conversion experience. The old guard resists. The new generation inherits.

Popper found this account troubling. It seemed to reduce science to a sociology of belief rather than a logic of inquiry.

Imre Lakatos, Popper’s younger colleague at the London School of Economics, tried to split the difference. Lakatos replaced Popper’s isolated theory with the concept of the research programme: a sequence of theories held together by a hard core of non-negotiable commitments, surrounded by a protective belt of auxiliary hypotheses that can be modified to absorb anomalies without abandoning the center. The question for Lakatos was not whether a given theory is falsifiable, but whether a research programme is progressive or degenerating. A progressive programme keeps making novel predictions that get confirmed. A degenerating one just keeps patching its auxiliaries to survive counter-evidence, without generating anything genuinely new.

On this view, astrology is pseudoscientific not because it can’t be tested — it has been tested — but because it has failed repeatedly to generate confirmed novel predictions and has not responded to those failures in ways that expand its empirical reach. It degenerates. It does not grow.

This is a more historically realistic picture. It also has a built-in problem: a degenerating programme might always stage a comeback. The criterion is indefinitely deferred. You can never quite declare something dead.

Laudan and the Harder Question

Larry Laudan, in his 1983 essay “The Demise of the Demarcation Problem,” made what many consider the most devastating argument of all. He said that the entire project of finding a single necessary and sufficient criterion is doomed, and history proves it. Astrology is falsifiable and has been falsified. String theory, at least in certain formulations, makes no testable predictions. Creationism makes empirical claims. Psychoanalysis has been tested and found wanting. The boundaries of any proposed criterion always end up cutting the wrong way in enough cases to undermine it.

Laudan’s conclusion was that the “demarcation problem” is a pseudoproblem: the real questions are always about the specific quality of a specific theory’s evidence, not about whether it clears some categorical bar.

The philosophical community did not fully accept this, and the courts had already gone the other way. In McLean v. Arkansas, decided in January 1982, a challenge to an Arkansas law requiring balanced classroom treatment of creation science in public schools, philosopher Michael Ruse testified that falsifiability was among the marks of genuine science. Judge William Overton ruled that creation science was not science. The argument worked, even if it was philosophically imprecise.

The lesson is not that demarcation criteria are useless. It’s that no single criterion is sufficient. Massimo Pigliucci and his collaborators, in the 2013 collection Philosophy of Pseudoscience, made the case for what they called a cluster approach: testability, responsiveness to evidence, openness to criticism, reproducibility, coherence with established knowledge. No one box checked. The whole pattern matters.

Why This Question Is Not Academic

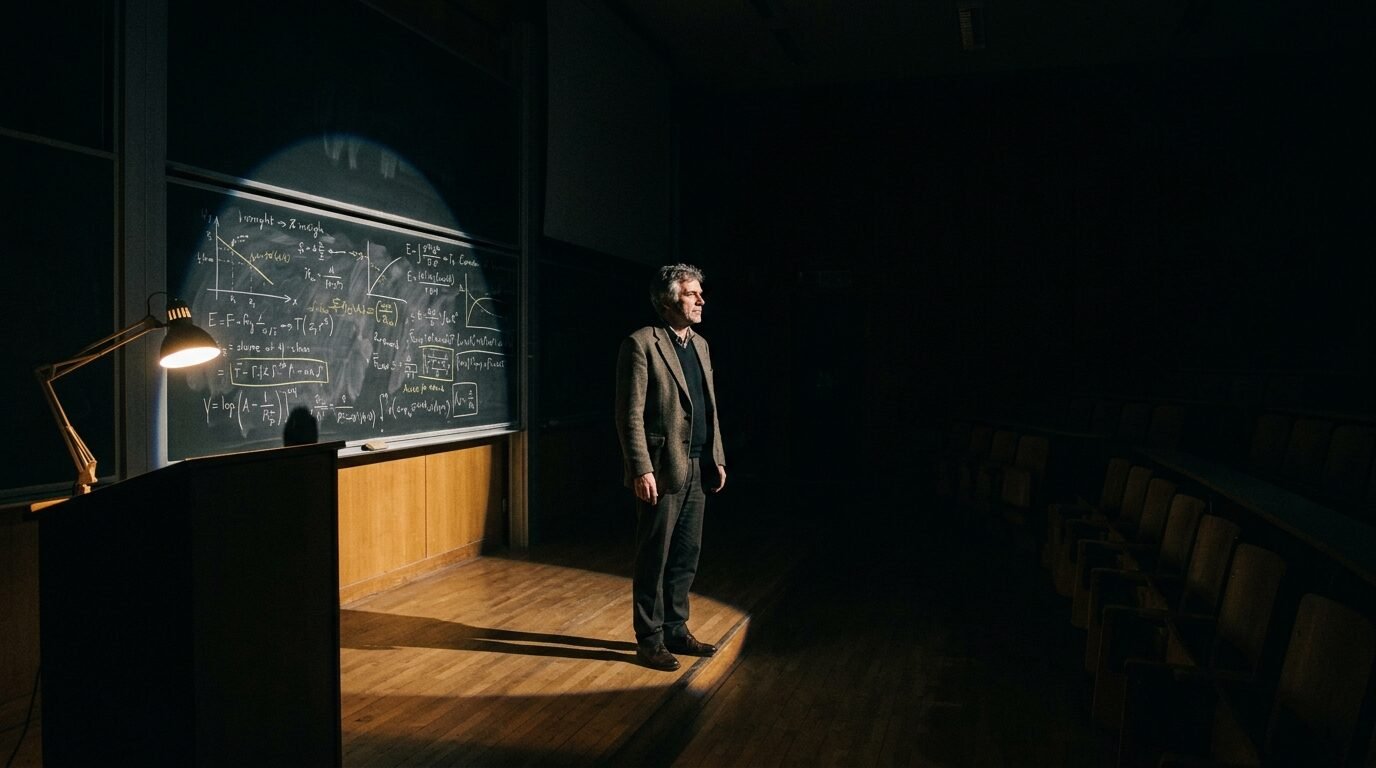

I spent years in graduate seminars working through exactly these arguments — first at Long Island University, then at The New School — and the conviction I came away with was not that Popper was right in every detail. It was that he was right about the thing that matters. The question “what evidence would change your mind?” remains the most clarifying thing you can ask anyone who is trying to tell you something is true.

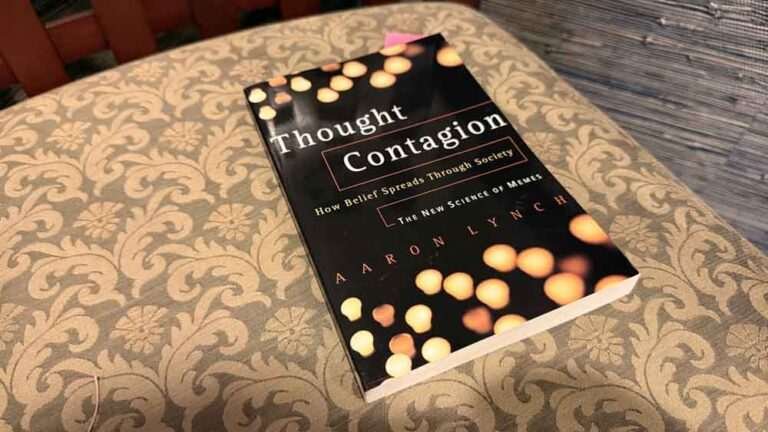

Right now, climate science denialism shares the structural features of the pseudosciences Popper diagnosed: it fabricates controversies where genuine scientific consensus exists, cherry-picks data, and modifies its auxiliary hypotheses endlessly to resist disconfirmation. A 2024 count found that nearly a quarter of the members of the U.S. Congress qualified as climate change deniers. The epistemic damage is real.

Then add generative AI. A system that can produce grammatically flawless, citation-rich, peer-formatted text without any genuine empirical grounding. The demarcation problem is no longer a puzzle for philosophers. It is a design challenge, an institutional challenge, and ultimately a democratic one. Maintaining the conditions under which falsification can function — independent peer review, transparent methodology, open access to data, reproducible results — is not a passive academic exercise. It requires active defense. Lakatos understood this, even if his framework didn’t resolve every case: science is not just a set of criteria. It is a form of life that has to be maintained.

What Popper Actually Left Us

Falsifiability is not the whole of science. But the willingness to be falsified — the genuine, institutionally supported commitment to honest engagement with disconfirming evidence — is arguably its soul.

Popper never solved the demarcation problem in the sense of providing a clean algorithm. Neither did Lakatos. Neither did Laudan, despite his best arguments. The boundary between science and pseudoscience is not a wall but a gradient, maintained by a cluster of practices and a community committed to following them. What Popper gave us was not a solution but a question sharp enough to cut through almost anything: not just theories, but the intellectual character of whoever is defending them.

A theory that explains everything explains nothing. It has insulated itself from reality. Whether that theory concerns evolution, economics, or the supplements being sold during a late-night infomercial, the test remains the same.

What would make you wrong? And are you actually willing to find out?

Sources

- Popper, K. (1959). The Logic of Scientific Discovery. Routledge.

- Popper, K. (1962). Conjectures and Refutations: The Growth of Scientific Knowledge. Basic Books.

- Lakatos, I. (1978). The Methodology of Scientific Research Programmes. Cambridge University Press.

- Kuhn, T.S. (1962). The Structure of Scientific Revolutions. University of Chicago Press.

- Laudan, L. (1983). The Demise of the Demarcation Problem. In R.S. Cohen & L. Laudan (Eds.), Physics, Philosophy and Psychoanalysis. Reidel.

- Pigliucci, M. & Boudry, M. (Eds.). (2013). Philosophy of Pseudoscience: Reconsidering the Demarcation Problem. University of Chicago Press.

- Stanford Encyclopedia of Philosophy: Karl Popper

- Stanford Encyclopedia of Philosophy: Science and Pseudo-Science

- Internet Encyclopedia of Philosophy: Pseudoscience and the Demarcation Problem

- Norton, J.D.: Why Falsifiability Does Not Demarcate Science from Pseudoscience

- Wikipedia: Falsifiability

- Aeon Essays: Imre Lakatos and the Philosophy of Bad Science

- Notre Dame Philosophical Reviews: Philosophy of Pseudoscience

- Wikipedia: Climate Change Denial