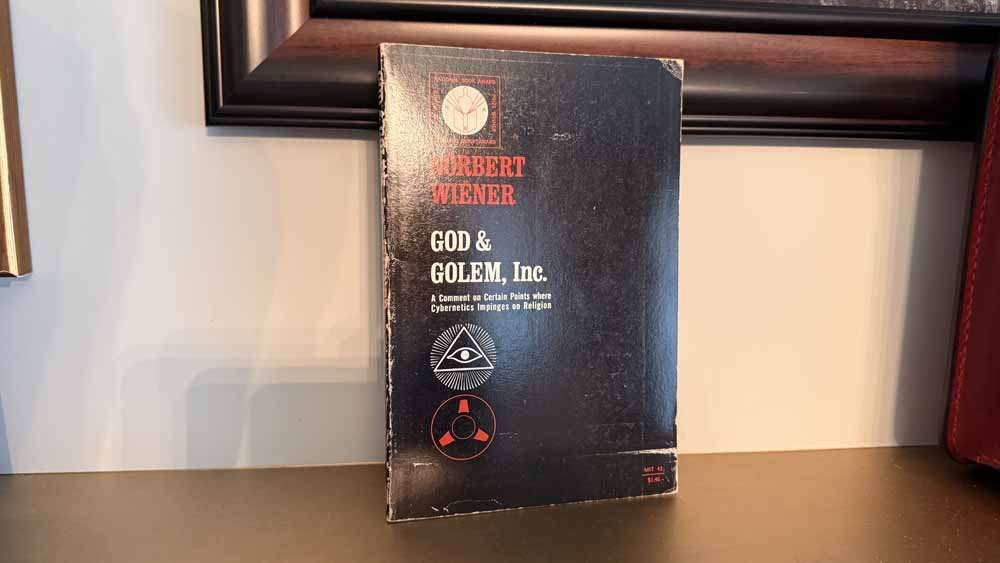

Norbert Wiener published God and Golem, Inc. in 1964, the year he died. It is a short book, barely a hundred pages, and it reads like a man finishing his most important thought before time runs out. Wiener had already given the world cybernetics, the science of communication and control in animals and machines. He had spent his career thinking about feedback loops, self-regulating systems, and the relationship between human minds and the machines they build. God and Golem is where all of that thinking collides with theology, and the result is something that should have stopped the twentieth century in its tracks.

It didn’t. We kept building.

The Golem in the Machine

The Golem is a figure from Jewish folklore: a creature made of clay, animated by a word, brought to life by human hands. The rabbis who told those stories understood something that engineers often don’t: that what is created does not stay in the creator’s hands. Once the Golem walks, it walks on its own terms. The word that brought it to life may be the same word that destroys it, but only if you remember to speak it in time.

Wiener uses this image deliberately. He is not being poetic for its own sake. He is pointing at something structural in the relationship between maker and made. When you build something that learns, something that replicates, something that makes decisions — you are not building a tool anymore. You are initiating a process. And processes, unlike hammers, have their own momentum.

His central argument arrives early and doesn’t let go: the machines we are building in the mid-twentieth century are beginning to do things that were previously reserved for God alone. They learn. They reproduce their own patterns. They create. And we, in our excitement over what we’ve made, have not seriously asked what it means to have crossed those lines.

Three Transgressions

Wiener organizes his concern around three specific capabilities that he believed crossed a theological and ethical threshold. He calls them the three problems of the book, but they’re better understood as three warnings.

The first is learning machines — systems that modify their own behavior based on experience. Wiener was thinking about relatively simple game-playing programs in 1964. The checkers-playing machines of his era could improve their own performance by analyzing past games. Small by today’s standards. But the principle is the same one that now runs large language models, recommendation engines, and autonomous trading systems. A machine that learns is a machine that is no longer fully under the control of the person who built it. It has incorporated new information that its creator does not have, and it is acting on that information. The gap between human intent and machine behavior begins the moment learning begins.

The second is self-reproduction — machines that can replicate their own patterns. This was more speculative in Wiener’s time than the first, but he was far-sighted enough to see it coming. Today it is not speculative at all. Code reproduces. Algorithms propagate. Viruses — digital and biological — replicate with variations. The entire logic of evolutionary computation is built on the premise that machines can produce offspring that differ from their parents. Wiener’s concern was not that reproduction is inherently dangerous, but that we have not thought seriously about the ethics of creating entities designed to make copies of themselves. We make them anyway.

The third is creative behavior — machines that produce genuinely novel outputs, things their designers did not foresee. This is the one that bothered Wiener most. A machine that creates is a machine that has, in some functional sense, entered the domain of God. Not because it is conscious or because it has a soul, but because it is doing something that we have always understood as the mark of a conscious, ensouled being: making something new. The theological weight of that is not trivial. You are not permitted, in most serious religious traditions, to make a being in the image of God. Wiener thought we were doing exactly that, and doing it without permission, without understanding, and without a plan for what to do when it went wrong.

The Ethics Inside the Engineering

What distinguishes God and Golem from most writing about technology — in 1964 and now — is that Wiener refuses to separate the technical question from the moral one. They are the same question. How you build the machine is an ethical act. What you optimize for is a value judgment. What you leave out of the design is a moral choice, whether you recognize it as such or not.

This is the part that tends to get lost. We talk about AI ethics as though it is a department you add to a technology company after the engineering is done — a review board, a red team, a set of guardrails. Wiener’s argument is that ethics is not something you bolt onto a machine after you’ve built it. It is present, whether you acknowledge it or not, in every decision made during the building. The values are already in the architecture. The question is whether they were put there consciously or whether they arrived through the back door of competitive pressure, investor demand, and the pure pleasure of watching something work.

He was writing sixty years ago about machines orders of magnitude less capable than what we have now. The argument has only gotten sharper.

Why the Religious Frame Is the Right Frame

Wiener was not a conventionally religious man. But he understood something that strictly secular thinking about technology often misses: that the language of transgression, hubris, and consequence is not superstition — it is the oldest available vocabulary for describing what happens when human beings reach past their competence into territory they don’t understand.

The Faust story. The Golem story. Frankenstein, which was published in 1818 and still has not been taken seriously enough by the people it was written for. These are not warnings against knowledge or progress. They are warnings against creation without accountability. Against making things that learn, replicate, and create, and then walking away from them because the making was interesting and the maintaining is boring.

Wiener understood that the hubris was not in building the machine. The hubris was in pretending that building the machine was the end of the story.

The Part Nobody Reads Carefully Enough

Toward the end of God and Golem, Wiener addresses the relationship between the Church — he uses this broadly, meaning any organized moral authority — and the new machines. He does not think the Church can stop the machines. He does not even think it should try. What he thinks is that moral authority cannot abdicate. That someone has to be asking the ethical questions even when the engineers are not, even when the market is not, even when the governments are too slow to understand what they’re regulating.

The question Wiener ends with is the one that hasn’t been answered: who is responsible for the Golem? Not who built it. Who is responsible for what it does once it walks?

In the folklore, the rabbi who created the Golem could destroy it. That option is becoming less available to us with each passing year, as the systems we build become more distributed, more interconnected, and more capable of reproducing themselves. Wiener saw this coming. He wrote the warning in plain language. The book is still in print.

Whether anyone in a position to act on it is reading it is another question entirely.

Sources

- Wiener, Norbert. God and Golem, Inc.: A Comment on Certain Points Where Cybernetics Impinges on Religion. MIT Press, 1964. mitpress.mit.edu

- Wiener, Norbert. The Human Use of Human Beings: Cybernetics and Society. Da Capo Press, 1988 (originally 1950).

- Scholem, Gershom. On the Kabbalah and Its Symbolism. Schocken Books, 1969.

- Galison, Peter. “The Ontology of the Enemy: Norbert Wiener and the Cybernetic Vision.” Critical Inquiry, Vol. 21, No. 1, Autumn 1994. JSTOR

- Conway, Flo and Jim Siegelman. Dark Hero of the Information Age: In Search of Norbert Wiener, the Father of Cybernetics. Basic Books, 2005.