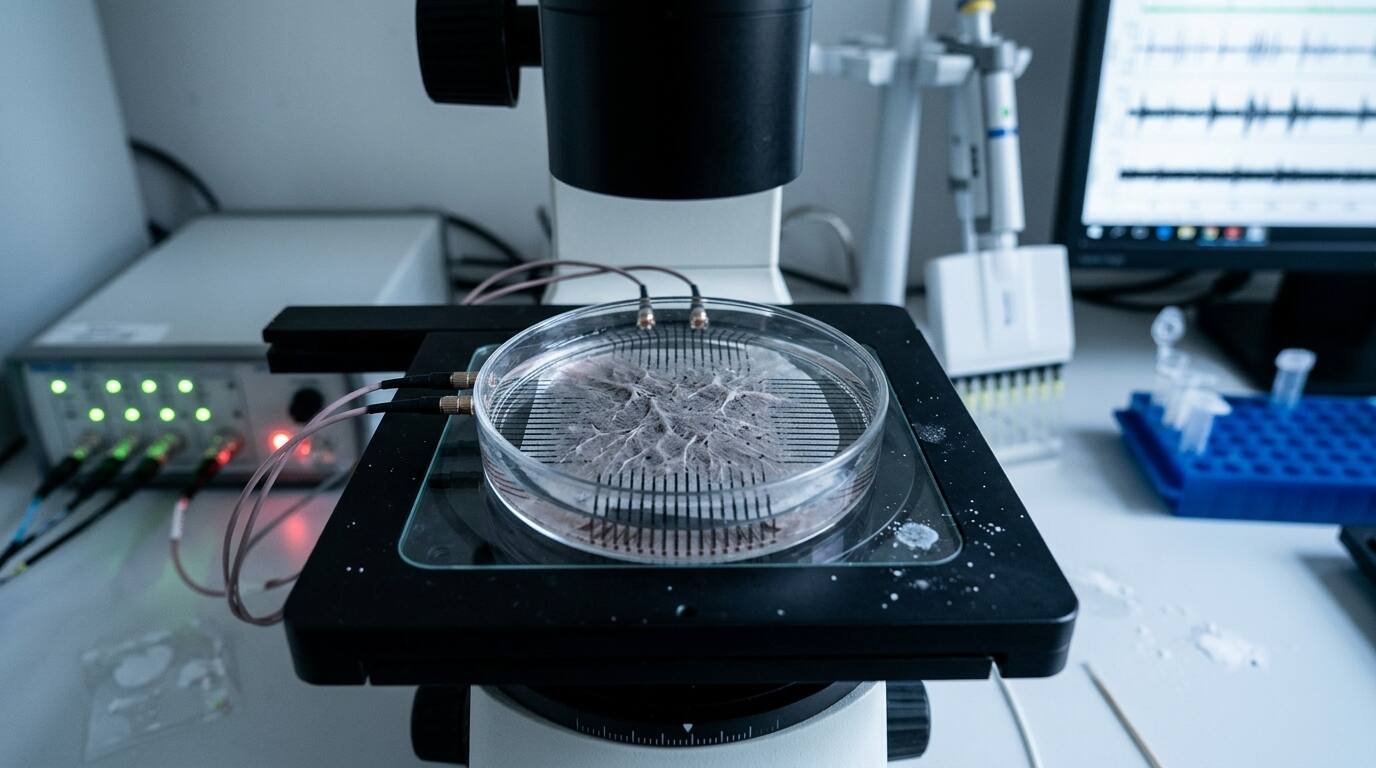

In October 2022, a paper published in Neuron by Brett Kagan and colleagues at Cortical Labs in Melbourne described a system they called DishBrain: approximately 800,000 human cortical neurons, derived from induced pluripotent stem cells and cultured on a multi-electrode array, that had been trained to play a simplified version of Pong. The neurons received sensory input through electrical stimulation indicating the position of the ball relative to the paddle, and they controlled the paddle’s movement through their own electrical activity. They learned, in the sense that their performance improved measurably over time. The training did not rely on reward-based reinforcement. It was grounded instead in a free energy minimization framework: a hit was followed by predictable stimulation and a miss by unpredictable stimulation, so the culture could make its inputs more predictable by keeping the ball in play.

The paper was precise about what it had and had not shown. It had not shown that the neurons were conscious. It had not demonstrated subjective experience of any kind. What it had demonstrated was that a biological neural network, assembled from human cells in a culture dish, exhibits adaptive learning behavior in response to structured environmental feedback.

That demonstration was enough to make a lot of people very uncomfortable, for reasons that the field of bioethics is still working to articulate.

What Brain Organoids Are

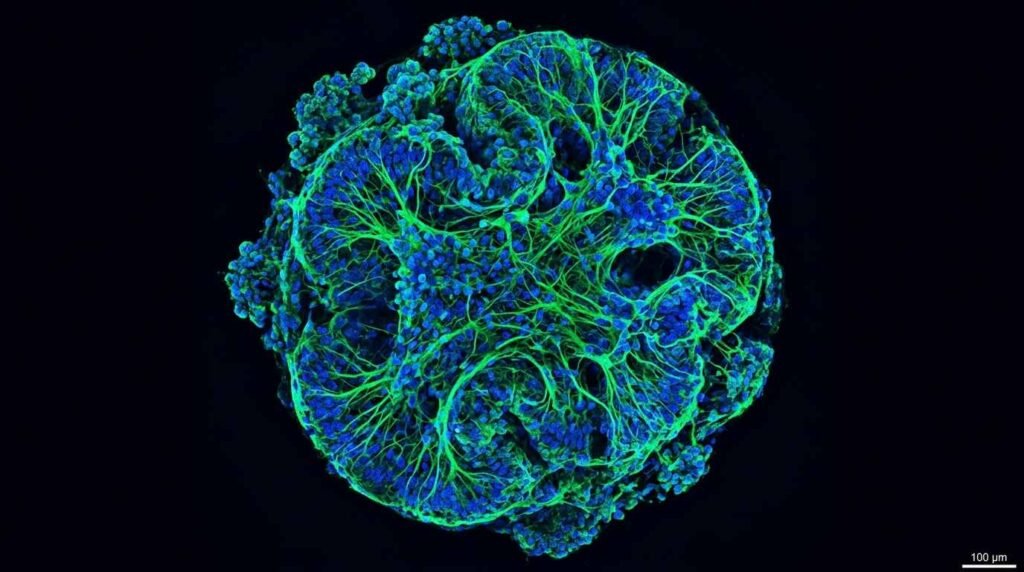

Brain organoids are three-dimensional clusters of neural tissue grown from pluripotent stem cells, which can develop into almost any cell type in the body, including neurons. Unlike the two-dimensional cell cultures used in most neuroscience laboratory work, organoids self-organize into structures with spatial architecture: distinct layers, different cell types in specific arrangements, and spontaneous electrical activity that in some respects resembles the activity of developing brain tissue.

The DishBrain system was not precisely an organoid in the technical sense — it used dissociated cortical neurons rather than a self-organized three-dimensional structure. But the terminology of “organoid intelligence” has been adopted broadly to cover both formats, and the Johns Hopkins initiative announced in 2023 explicitly uses organoids as its primary model system.

The distinction between dissociated neuron cultures and true organoids matters scientifically because organoids exhibit higher levels of structural complexity, including something resembling the cortical layering of developing human brain tissue. This complexity makes them more useful as models of human neurology — and makes the ethical questions about their status more pressing.

The Johns Hopkins Organoid Intelligence Initiative

In February 2023, a team at Johns Hopkins University published a roadmap paper in Frontiers in Science, authored by Smirnova and colleagues, announcing the Organoid Intelligence (OI) initiative. The paper framed OI as a potential alternative computational substrate to silicon-based computing — one that might offer advantages in energy efficiency, adaptability, and modeling biological processes.

The roadmap identified specific research milestones: developing organoids capable of learning tasks, integrating them with machine learning systems, and scaling their complexity toward structures with greater computational capacity. It also, unusually for a technical research roadmap, included a substantial section on ethical considerations — acknowledging that the initiative was operating in territory where existing bioethical frameworks were insufficient.

The paper’s language on sentience was careful but direct. The authors noted that organoids currently lack the structural complexity, vascular system, immune function, and sensory inputs present in the intact brain, and that present organoids show no evidence of sentience by any current scientific measure. They also argued that as organoid complexity increases, the field will need frameworks for addressing moral status, and that those frameworks should be in place before the experiments outpace the ethics.

What “Sentience Threshold” Debates Are Actually About

The question of whether brain organoids can suffer is not, in the current scientific literature, a claim that they do. It is a question about which criteria should be used to determine whether they might, and whether those criteria will be in place before or after the relevant complexity threshold is crossed.

The philosophical challenge is that the markers used to assess sentience in other animals (behavioral responses to noxious stimuli, neuroanatomical structures associated with pain processing in humans, evolutionary relatedness) do not map cleanly onto an in vitro neural tissue culture. DishBrain’s neurons respond to electrical stimulation and adjust their behavior. That is not the same as nociception, but neither is it clearly different in kind from the most basic forms of sensory response.

Commentary published in Nature in 2023, responding to the OI initiative, described the ethical questions in terms of moral uncertainty rather than moral conclusions. Several bioethicists argued against waiting until sentience is established before developing protections. Instead, they proposed drafting precautionary guidelines now, while complexity remains below any plausible sentience threshold, so that the research community is not forced into ad hoc ethical decisions under time pressure as capabilities scale.

The regulatory question is correspondingly underdeveloped. Neither FDA regulation nor other current oversight of biological research includes frameworks specifically designed for neural tissue cultures that exhibit learning behavior. Institutional review board protocols are designed for human subjects research, which protects the people who donate cells rather than the tissue grown from those cells. Organoid intelligence research currently operates precisely in the gap between these categories: biological material derived from human cells that exhibits behavioral characteristics, governed by frameworks designed for neither.

What the Research Is Actually Good For

The practical scientific value of brain organoids and DishBrain-type systems is substantial and largely uncontroversial, which is part of why the research is proceeding despite the unresolved ethical questions.

Organoids derived from the stem cells of patients with Alzheimer’s, ALS, autism spectrum conditions, and schizophrenia allow researchers to study the cellular and circuit-level mechanisms of those conditions in human neural tissue, something mouse models cannot fully provide because they often fail to replicate human neurological disease with sufficient fidelity. Drug candidates can be tested on disease-specific organoids before clinical trials. Circuit-level questions about how human cortical neurons process information can be investigated in ways that in vivo human research cannot permit.

These applications are already producing results. Organoid models of glioblastoma have been used in drug screening. Autism-associated organoids have shown specific synaptic differences that correlate with known genetic risk variants. The Johns Hopkins OI roadmap frames the computational applications as secondary to these medical research functions.

The computational applications — organoids as biological computing substrates — remain substantially more speculative. The energy and space efficiency arguments relative to silicon computing require organoids to scale to complexities far beyond current capability, and the integration of biological and electronic systems at the required resolution is an unsolved engineering problem. DishBrain playing Pong is a proof of concept. It is not a demonstration that biological computation is ready to compete with silicon at any useful scale.
