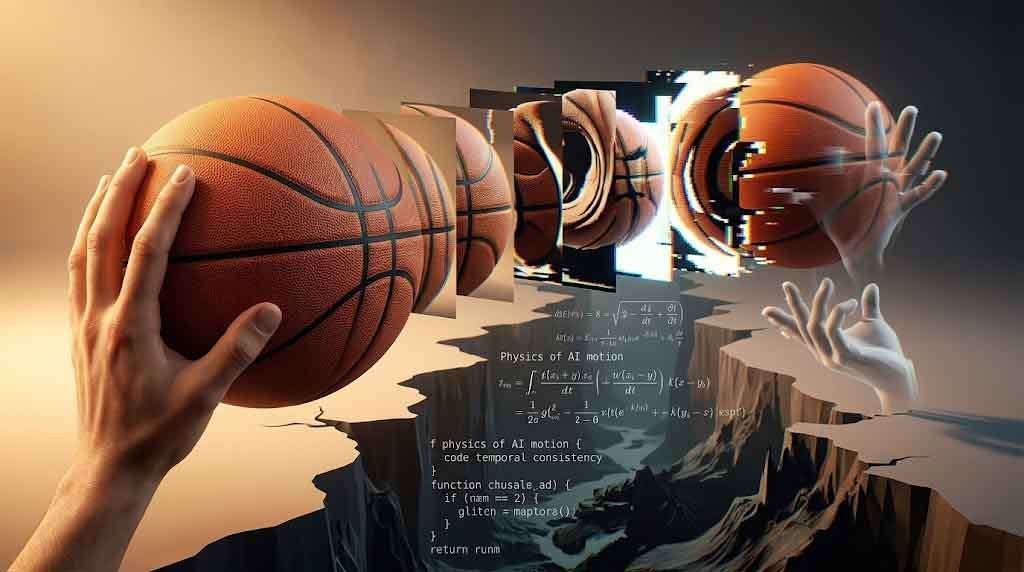

Watch a basketball spinning on a fingertip in an AI-generated video and something quietly goes wrong. The ball spins, reverses itself for no reason, then carries on as though physics never governed the moment. Your eye catches it before your brain names it — a subtle wrongness, a flicker of biological alarm. The frame is gorgeous. The lighting is cinematic. But the object doesn’t obey. And in that single violation of physical law, the illusion collapses.

This is the frontier where generative AI video lives in 2025: extraordinary at the surface, deeply unreliable beneath it. The industry has spent three years solving the visual half of the problem — lighting, texture, facial fidelity, resolution — while the temporal half, the dimension of time itself, keeps exposing the seams.

The Valley Is Older Than We Think

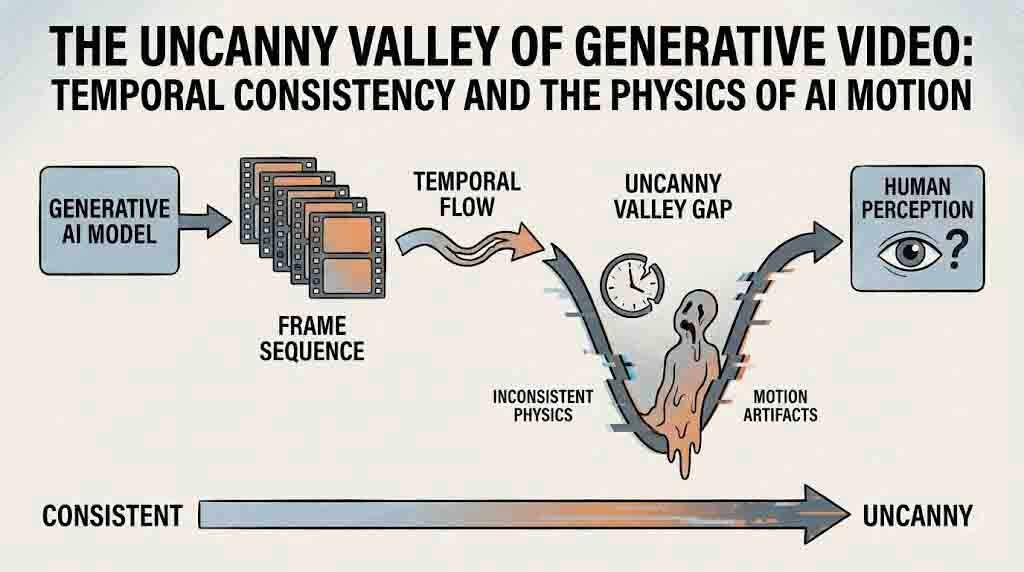

In 1970, robotics professor Masahiro Mori of the Tokyo Institute of Technology published a brief but durable essay in the Japanese journal Energy. He observed that as robots became increasingly humanlike in appearance, people’s affinity for them rose — until a certain threshold. Just short of true human likeness, affinity didn’t plateau. It plummeted into what he termed bukimi no tani: the uncanny valley. A robot doing a passable job at seeming human wasn’t experienced as a robot at all, but as an abnormal human — and that mismatch triggered revulsion rather than empathy (Mori, 1970; IEEE Spectrum, 2012).

Mori also noted something that feels prophetic today: the valley is steeper for moving objects than for still ones. A corpse is unsettling. A zombie is terrifying. Movement intensifies the uncanny. The moment you animate something that almost-lives, the failures in its motion become existentially disturbing.

That insight maps precisely onto the current state of AI-generated video. A single frame generated by Sora 2, Runway Gen-4, or Google Veo 3 can be indistinguishable from professional photography. But the moment the clip begins to move — when time becomes the variable — the machinery of wrongness activates. Mori’s valley turns out not to be a metaphor for AI video. It is its operating condition.

What “Temporal Consistency” Actually Means

The term sounds technical and bloodless, but temporal consistency is simply the promise that objects remain coherent through time. That a coffee cup on a table doesn’t quietly drift three inches between frames. That a character’s jawline doesn’t subtly reshape itself mid-conversation. That a hand picking up a pen doesn’t flicker with extra fingers on frame 47 before correcting itself on frame 48.

In human vision, temporal inconsistency is processed as error — often before conscious recognition. The brain predicts where an object should be based on the frame before, and any violation of that prediction registers as alarm. This is predictive coding in action: the nervous system is continuously constructing a model of the world and checking incoming visual data against it. When AI video breaks physics, it’s not just aesthetically imperfect. It’s triggering low-level threat-detection hardware that evolved to notice when something in the environment is behaving impossibly.

Researchers evaluating AI video quality have formalized this into measurable dimensions: spatial consistency (does the object look the same frame-to-frame?), temporal coherence (does it move plausibly?), and motion quality (does the physics track?). A February 2025 survey from arXiv, covering the full landscape of spatiotemporal consistency research, notes that most current video generation systems excel at spatial fidelity — individual frames look right — but fundamentally struggle with temporal coherence across frames and precise physical plausibility (Yin et al., 2025, arXiv:2502.17863).

The problem is structural. Early diffusion models were trained on images. They learned what the world looks like, not how it behaves. When those models were extended into video by adding temporal attention layers — essentially teaching them to hallucinate coherent sequences of images — they inherited the image model’s blindness to physics. They learned to approximate motion by observing how things had moved in training data, without internalizing the laws that govern why they move that way.

The Jitter Problem and the Architecture That Made It Worse

Before 2025, the failure mode was obvious and unglamorous. Early AI video suffered from what the industry simply called “jitter” — generated elements would morph, flicker, or drift inconsistently from one frame to the next. A character’s face would shift between frames. Background objects drifted and warped. Physics-based motion appeared artificial. The result was video that announced its synthetic origin in the first two seconds of playback.

This wasn’t a cosmetic problem. It was an architectural one. Diffusion-based video models generate video by predicting each frame in relation to neighboring frames, but most models of the 2023–2024 era could only produce 16 frames per sampling pass without additional scaffolding (FancyVideo, IJCAI-25). Longer sequences were assembled by stitching these short windows together — and the joints showed. Objects didn’t just flicker. They accumulated errors. A character’s hand in frame 1 looked fine. By frame 64, it had quietly migrated.

The “floaty physics” problem — objects that don’t have weight, don’t obey momentum, don’t respond to friction — plagued every model in this period. Runway Gen-3 Alpha, released in early 2025, made a significant leap in coherence and prompt adherence, but still produced physics violations in fast-motion or multi-object scenes. Academic evaluation frameworks like VBench broke video quality into 16 dimensions — including object consistency, background consistency, motion smoothness, and dynamic range — and consistently found that “AI models often optimize static features at the expense of dynamic consistency” (DEVIL framework, cited in PMC, 2025).

The commercial models that debuted in 2024 and early 2025 were generating objectively beautiful images in sequence. They had just never learned how a basketball understands a spinning finger.

Sora, Veo, Runway: Where the Physics Still Breaks

Sora 2, OpenAI’s flagship text-to-video model released in September 2025, represented a genuine benchmark shift. Its fluid dynamics are often described as impressive — honey pouring onto toast, smoke reacting to airflow — and its scene intelligence is real: it understands that actions should cause reactions, that one event should logically precede the next. For longer, more cinematic sequences, it leads the market.

And yet the basketball still reverses direction.

Hands playing piano show fingers not quite connecting to keys. Cards being shuffled merge and morph. Fine motor manipulation — anything requiring a model to understand how fingers and small solid objects interact — remains a persistent failure zone (StarupHub.ai, 2025). The model can render a convincing room, a convincing face, convincing rain. But the precise physics of granular interaction, where two objects meet and transfer force according to Newtonian law, still breaks at a frequency that removes it from the category of reliable.

Google Veo 3 is praised for its camera semantics and film-style movement simulation. Runway Gen-4’s December 2025 release specifically marketed “infinite character consistency” as a core capability, achieving it through improved temporal attention mechanisms that track object identity across the entire clip duration. Runway also integrated physics-informed world understanding — gravity calculations for falling objects, fluid dynamics principles for water flow, cloth draping and folding on character models — and according to professional cinematographers, Gen-4 output can pass for traditional CGI in many commercial contexts (AIFilms.ai, January 2026).

But “passing for CGI” is not the same as simulating physics. CGI is also hand-crafted. What these models are being praised for is the difficulty in telling AI-generated video from human-made synthetic video — not from reality. The uncanny valley hasn’t been crossed. It’s been repositioned. The comparison class has shifted from “does this look real” to “does this look like something a skilled artist made.” That is progress, but it’s not resolution.

The Architectural Shift: When Models Started Learning to Think in 3D

The most significant structural development of 2025 was the integration of Neural Radiance Fields (NeRFs) and Gaussian Splatting within video diffusion models — a change in how AI systems conceptualize space itself.

Traditional video diffusion models think in 2D. They generate flat sequences of images and use temporal attention to make them cohere. NeRFs and Gaussian Splatting give a model a different kind of memory: a persistent, volumetric representation of a scene that exists in three dimensions and can be rendered from any viewpoint. Instead of asking “what does frame 47 look like given frame 46?”, a model with a 3D representation can ask “given that this object occupies this position in space, where should it be in the next frame?”

This distinction is not subtle. A model that reasons from 2D statistics about motion will generate plausible motion in statistically common situations. A model that reasons from 3D spatial relationships will generate correct motion in physically constrained situations, because it knows where the object is, not just what the object looks like.

NVIDIA’s research lab published “Lyra” in late 2025, a framework that distills the implicit 3D knowledge inside video diffusion models into explicit 3D Gaussian Splatting representations — enabling real-time rendering and scene generation with genuine spatial coherence (NVIDIA Research, ICLR 2026). Runway’s research documentation describes Gen-4’s physics-informed world understanding as simulating real-world constraints at the point of generation rather than approximating them from visual data. According to a January 2026 analysis by Towards AI, this shift — integrating NeRFs and Gaussian splatting within video diffusion models — represents a fundamental change in how AI systems understand and generate video content (AIFilms.ai, January 2026).

The practical result is a generation of models that don’t just look at temporal consistency as a post-hoc editing problem, but build it into the architecture of how a scene is understood before the first frame is generated. Whether that fully solves the physics problem remains to be proven across edge cases. But it changes the question from “how do we fix the jitter?” to “how do we teach the model about gravity?” The second question is more interesting, and probably more tractable.

The Human Threshold and Why It Matters for Creators

In late 2025, benchmarks suggested that 95% of viewers could not distinguish high-quality AI-generated video from authentic footage (AIVideoDetector.org, 2025). That number deserves some skepticism — it depends heavily on the specific clips, the viewing conditions, and what “authentic footage” means — but the directionality is real. For social media clips, short-form commercial content, and B-roll, the perceptual gap has largely closed.

For anything requiring sustained physical plausibility — a human hand doing detailed work, water behaving precisely, multiple objects interacting with realistic force transfer — the gap remains measurable and practically relevant. Documentary-quality footage. Medical visualization. Legal or forensic video. These domains require not just visual credibility but physical verifiability.

The implication for creators is bifurcated. If your use case is atmosphere, narrative motion, establishing shots, and stylized abstraction, today’s AI video tools are genuinely production-ready. Sora 2, Veo 3, and Runway Gen-4 have crossed a threshold for that class of work. If your use case requires physics that an expert would examine frame by frame — a surgeon watching a procedure simulation, an engineer reviewing a failure analysis, a lawyer inspecting evidence — these tools are not yet operating in that register.

The uncanny valley for AI video is less about eeriness and more about reliability. The question that 2025 answered is whether AI video can generate footage that looks real. The question that 2026 is beginning to answer is whether AI video can generate footage that is real — physically accurate, temporally honest, and trustworthy across the entire duration of a clip. That question is considerably harder. It requires the model not just to learn what motion looks like, but to understand why motion happens.

Physics Is Not a Style

The deepest issue with AI video’s temporal consistency problem is philosophical, not technical. When a model hallucinates a basketball reversing direction, it isn’t making an aesthetic choice. It isn’t choosing a stylized physics for expressive effect. It’s simply wrong — not about appearance, but about the structure of reality.

This is what distinguishes physics from style. A model can learn to generate footage that looks like Kubrick or Cassavetes or Agnès Varda. Cinematographic style is pattern, and patterns can be learned from data. But Newtonian mechanics are not a style. Gravity is not a preference. The conservation of momentum is not a training distribution. It is a constraint on what is allowed to happen, and no amount of perceptual plausibility can substitute for it when the application requires truth.

The frontier work on NeRF and Gaussian Splatting integration moves in the right direction precisely because it begins to give models a framework for constraint rather than just pattern. A model that has internalized a 3D representation of a scene can be asked to respect physical laws as boundary conditions. The basketball doesn’t reverse direction not because the model has learned that basketballs usually don’t reverse, but because the model understands that conservation of angular momentum doesn’t permit it.

That transition — from learned statistics about how the world looks to principled reasoning about how the world works — is the real uncanny valley. It’s not a valley of aesthetics. It’s a valley of epistemology. And crossing it will require AI systems that don’t just observe physics but reason within it.

Masahiro Mori, who died in January 2025 at 97, spent the final years of his career writing about robotics and Buddhist philosophy. He believed that to build machines in the image of life, you first had to understand what life was. The insight translates. To generate video that moves with the conviction of reality, you don’t need a larger training set. You need a model that knows why things fall.

Sources

- Mori, M. (1970). Bukimi no Tani (The Uncanny Valley). First English translation authorized by Mori, published in IEEE Robotics & Automation Magazine, June 2012. https://spectrum.ieee.org/the-uncanny-valley

- Kageki, N. (2012). An Uncanny Mind: Masahiro Mori on the Uncanny Valley and Beyond. IEEE Spectrum. https://spectrum.ieee.org/an-uncanny-mind-masahiro-mori-on-the-uncanny-valley

- Yin, Z., et al. (2025). A Survey: Spatiotemporal Consistency in Video Generation. arXiv:2502.17863. https://arxiv.org/abs/2502.17863

- PMC / National Center for Biotechnology Information. (2025). A Perspective on Quality Evaluation for AI-Generated Videos. https://pmc.ncbi.nlm.nih.gov/articles/PMC12349415/

- StarupHub.ai. (2025). Sora 2: A Glimpse into Generative Video’s Uncanny Valley and Creative Frontier. https://www.startuphub.ai/ai-news/ai-video/2025/sora-2-a-glimpse-into-generative-videos-uncanny-valley-and-creative-frontier/

- AIFilms.ai. (January 2026). Sora 2 and Runway Gen-4 Solve AI Video’s Biggest Problem. https://studio.aifilms.ai/blog/sora-2-runway-gen-4-solve-jitter-problem-2026

- AIVideoDetector.org. (2025). AI Video Generation Tools 2025: Sora vs Runway vs Pika. https://www.aivideodetector.org/blog/ai-video-generation-tools-comparison-2025

- NVIDIA Research / Bahmani et al. (2026). Lyra: Generative 3D Scene Reconstruction via Video Diffusion Model Self-Distillation. ICLR 2026. https://research.nvidia.com/labs/toronto-ai/lyra/

- FancyVideo: Towards Dynamic and Consistent Video Generation via Cross-frame Attention. IJCAI 2025. https://www.ijcai.org/proceedings/2025/1120.pdf

- Clippie.ai. (2025). Recap: The Best AI Video Creation Trends from 2025. https://clippie.ai/blog/ai-video-creation-trends-2025-2026

- Dunham, K. (2025). How to Actually Control Next-Gen Video AI: Runway, Kling, Veo, and Sora Prompting Strategies. Medium. https://medium.com/@creativeaininja/how-to-actually-control-next-gen-video-ai-runway-kling-veo-and-sora-prompting-strategies-92ef0055658b