One does not need to travel to the Galápagos to observe speciation in action — one need only watch a population of neural architectures compete, reproduce, and perish inside a GPU cluster over the course of an afternoon.

The observation is not figurative. What is transpiring in the research laboratories of Uber AI, Google Brain, and OpenAI is not the borrowing of biological language to describe computational phenomena. It is the implementation — literal, mechanistic, and mathematically rigorous — of the same principles Charles Darwin set forth in On the Origin of Species in 1859. The mechanism is identical. A population varies. Variations compete under environmental pressure. The unfit perish. The fit reproduce, transmitting their characteristics to the next generation. Over sufficient iterations, the population drifts toward forms better suited to the prevailing conditions.

That the environment in question is a loss function rather than a savannah, and that reproduction occurs through parameter copying rather than biological inheritance, changes the substrate but not the logic.

The Lineage Begins: NEAT and the First Artificial Speciation

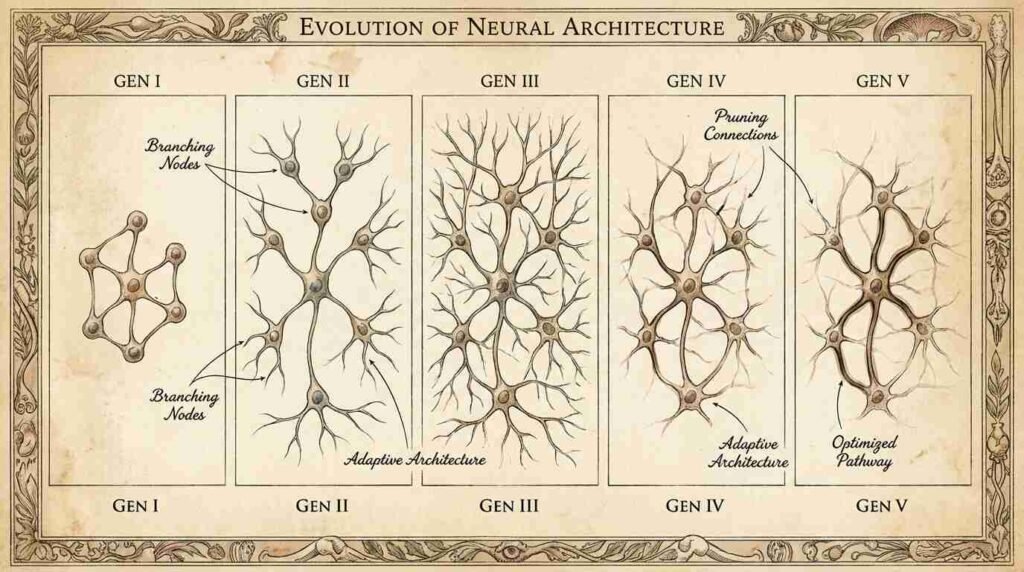

The formal genealogy of machine learning’s evolutionary turn begins, for practical purposes, with Kenneth Stanley and Risto Miikkulainen’s 2002 paper in Evolutionary Computation: “Evolving Neural Networks through Augmenting Topologies.” The algorithm they described — NEAT, for Neuroevolution of Augmenting Topologies — addressed a problem that had defeated earlier attempts at evolving neural networks: how to meaningfully compare and recombine networks of different structures. Prior approaches had attempted evolution on fixed topologies, varying only weights. Stanley and Miikkulainen’s insight was that structure itself should be subject to selection — that networks should be permitted to grow more complex over generations, with historical markers called innovation numbers allowing the algorithm to track which structural elements shared common ancestry.

This is speciation by another name. The innovation number is the algorithm’s equivalent of a cladistic marker — a means of determining, when two individuals are crossed, whether their shared structures descend from a common source or arose independently. NEAT has been in continuous development since 2002, and the lineage it established runs directly into contemporary research. To read the paper today is to recognize, in its meticulous attention to population dynamics and reproductive fitness, the same instincts that animated Darwin’s notebooks aboard the Beagle.

For readers interested in the philosophical foundations of evolutionary thinking that underpin this work, the review of Darwin’s Dangerous Idea by Daniel Dennett here examines how the algorithmic nature of natural selection — Dennett’s great contribution — made computation’s adoption of it almost inevitable.

The Uber Experiment: When Evolution Outran Reinforcement Learning

In 2017, Uber AI Labs released a paper that attracted significant attention in machine learning circles, though it was received with some puzzlement by those wedded to the prevailing orthodoxy of reinforcement learning. The paper, “Evolution Strategies as a Scalable Alternative to Reinforcement Learning,” demonstrated that evolutionary approaches could match or exceed reinforcement learning performance on benchmark tasks — including the notoriously challenging MuJoCo continuous control environments and Atari games — while offering substantial advantages in parallelization.

The significance of this result is worth dwelling upon. Reinforcement learning, particularly the policy gradient methods that had produced celebrated results in game-playing AI, operates by having a single agent accumulate experience and update its parameters accordingly — a mechanism more analogous to individual learning than to evolutionary selection. Evolution strategies, by contrast, maintain a population of parameter vectors, evaluate each on the task, and construct the next generation by perturbation and selection among the successful. The population is the unit of adaptation. No individual accumulates experience across episodes; what persists is the distribution from which individuals are drawn, shifting generation by generation toward higher-fitness regions of parameter space.

The Uber result suggested that for many problems, the collective pressure of population-level selection was a more efficient teacher than individual experience. Darwin, who had puzzled over the relationship between individual variation and species-level change, would have found this an agreeable confirmation of his framework’s generality.

AutoML-Zero: Evolution Without a Hypothesis

Google Brain’s 2020 contribution to this lineage — published at ICML under the title “AutoML-Zero: Evolving Machine Learning Algorithms From Scratch” — represented a more radical application of the evolutionary principle. Where previous neuroevolution approaches had evolved the parameters or architectures of neural networks, AutoML-Zero evolved the learning algorithms themselves. The system began with no assumption about what a machine learning algorithm should look like. It was given only primitive mathematical operations — vector addition, element-wise multiplication, gradient computation — and tasked with assembling these into programs that could solve machine learning problems.

The programs competed. The unfit were eliminated. The fit reproduced, with random mutations introducing variation. Over many generations, the system independently discovered mechanisms recognizable to any machine learning practitioner: gradient descent, regularization procedures, normalization schemes. It discovered these not because they were specified, but because they were fit — because programs containing these structures outperformed programs that did not, under the selective pressure of the benchmark.

This is precisely the kind of result that forces a philosophical reckoning. The mechanisms of machine learning that researchers developed over decades of deliberate inquiry — mechanisms that required considerable intelligence to conceive — were rediscovered by a process that exercised no intelligence at all, only selection. Darwin reached an analogous conclusion about the eye. Its intricate adaptation to the function of vision requires no designer, only time and differential survival. AutoML-Zero extends this logic: intricate mathematical machinery requires no mathematician, only variation and selection applied with sufficient patience.

Fitness Landscapes and the Problem of Local Optima

The correspondence between biological evolution and neuroevolution is not, it must be acknowledged, without complications. Both processes share a fundamental vulnerability: the problem of local optima, or in biological terms, the problem of adaptive peaks as formulated by Sewall Wright in the 1930s. A population may climb to a fitness peak — a configuration superior to its immediate neighbors — and remain stranded there, unable to cross the valley of lower fitness that separates it from a higher peak elsewhere in the landscape.

Neuroevolutionary algorithms have developed responses to this problem that parallel biological mechanisms with some precision. Niching — the maintenance of distinct subpopulations exploring different regions of the fitness landscape — corresponds to geographic isolation and allopatric speciation. In NEAT, this takes the form of explicit species within the population, groups of sufficiently similar individuals that compete primarily against one another rather than the entire population. This protects innovation: a newly emerged structural novelty, which may perform poorly in the short term, is sheltered within its species long enough for subsequent mutations to realize its potential. It is, in miniature, the same logic by which geographic isolation on an island permits a variant population to evolve without being immediately outcompeted by the more numerous parent species.

The review of The Selfish Gene by Richard Dawkins here examined how Dawkins extended Darwin’s logic to the gene level — a perspective that maps cleanly onto neuroevolution’s treatment of individual parameters as the units competing for representation in the next generation’s population.

The Selective Environment and Its Pressures

Any evolutionary system is defined as much by its environment as by its population. In biological evolution, the environment is everything — climate, predation, resource availability, competition from other species. In neuroevolution, the environment is the benchmark, the loss function, the task distribution against which individuals are evaluated. And here the analogy becomes epistemologically interesting, because the choice of benchmark is a human decision, one that shapes the direction of evolutionary pressure with the same consequence that climate shapes the direction of biological adaptation.

A population of neural architectures evolved to maximize performance on ImageNet will diverge, over generations, from a population evolved on language modeling tasks — just as populations of the same ancestral species, separated by an ocean and subjected to different ecological pressures, will diverge into forms adapted to their respective environments. The benchmark is the island. The loss function is the climate.

This observation has practical consequences. Systems evolved under narrow task distributions may fail to generalize — they are, in ecological terms, specialists adapted to a particular niche, vulnerable to extinction if the niche changes. The challenge of building general-purpose evolved systems parallels the challenge of explaining, in biology, the emergence of generalist species capable of thriving across varied environments. It is not resolved merely by expanding the benchmark; it requires, as in nature, the application of varied and unpredictable selective pressures across generations.

What Variation Actually Means in Silicon

Darwin’s theory rested on three observations: that individuals vary, that variation is heritable, and that variation affects fitness. All three conditions hold in neuroevolution, but the mechanism of variation — mutation — deserves examination, because it operates quite differently from biological mutation and the difference is instructive.

Biological mutation is largely random and largely deleterious. The genetic code, refined over billions of years, is dense with functional consequence; most random changes to it destroy something. Neuroevolutionary mutation, by contrast, can be structured: architectural mutations that add nodes or connections (NEAT’s structural mutations) are designed to add rather than corrupt, introducing new computational pathways rather than degrading existing ones. This is closer to gene duplication — a known mechanism of evolutionary innovation, whereby a redundant copy of a gene is free to accumulate mutations without loss of the original function — than to point mutation.

The implication is that neuroevolution, even when it operates by an explicitly Darwinian mechanism, is not constrained to replicate biology’s exact implementation of that mechanism. It inherits the logic — variation, selection, inheritance — while adapting the details to its substrate. This is, one notes, precisely what biology itself does: the mechanism of natural selection is conserved across all life, while its implementation varies from organism to organism with extraordinary creativity.

You Might Also Like

Sources

- Kenneth O. Stanley & Risto Miikkulainen, “Evolving Neural Networks through Augmenting Topologies,” Evolutionary Computation (2002): https://nn.cs.utexas.edu/downloads/papers/stanley.ec02.pdf

- Esteban Real et al., “AutoML-Zero: Evolving Machine Learning Algorithms From Scratch,” Google Brain, ICML (2020): https://arxiv.org/abs/2003.03384

- Uber AI Labs, “Evolution Strategies as a Scalable Alternative to Reinforcement Learning” (2017): https://arxiv.org/abs/1703.03864

- Sewall Wright, “The Roles of Mutation, Inbreeding, Crossbreeding and Selection in Evolution,” Proceedings of the Sixth International Congress of Genetics (1932)